
Will artificial intelligence end the human race?

We are told that the robots will rise up, invade the world and take everything under their control. We have heard these warnings for decades, and today, at the beginning of the 21st century, their number is only growing. So is the fear that artificial intelligence will lead to the end of our species.

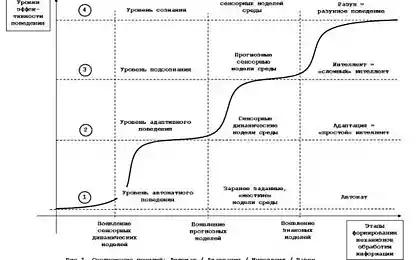

Such scenarios are not only in great demand in Hollywood but increasingly find supporters in science and philosophy. For example, Ray Kurzweil wrote that the exponential growth of artificial intelligence will lead to a technological singularity, the point at which machine intelligence surpasses human intelligence. Some see this as the end of the world; others see only opportunities. Nick Bostrom thinks that a superintelligence could help us solve the problems of disease, poverty and environmental destruction, as well as improve ourselves.

On Tuesday, the famous scientist Stephen Hawking joined the ranks of the prophets of the singularity, or rather its pessimistic camp, telling the BBC that "the development of full artificial intelligence could spell the end of the human race". He believes that humans will not be able to compete with an AI that can reprogram itself and reach a level far beyond the human. Then again, Hawking also believes that we should not try to contact aliens, because they would have only one goal: to subjugate or even annihilate us.

The problem with these scenarios is not that they are necessarily false (who can predict the future?) or that one should not engage with the scenarios of science fiction. The latter is unavoidable if you want to understand and appreciate modern technology and its future impact on us. It is important to bring our philosophical questions to such scenarios and to explore our fears, in order to find out what matters most to us.

The problem with focusing exclusively on artificial intelligence in the context of "doomsday" and other fatal scenarios is that it distracts us from other, more urgent and important ethical and social issues arising from technological developments in these areas. For example, is there a place for privacy in the world of information and communication technologies? Do Google, Facebook and Apple threaten freedom in the world of technology? Will further automation reduce jobs? Can new financial technologies threaten the world economy? How do mobile devices affect the environment? (To Hawking's credit, he did mention privacy in the interview, but then went on to talk about the end of the human era.)

These issues are much less attractive than a superintelligence or the end of mankind. Nor are they questions about intelligence or robots; they are about what kind of society we need and how we want to live our lives.

These questions are ancient: they appeared with the advent of science and philosophy, and the growing information technologies that are changing our world today force us to think about them again. Let's hope that the best human minds of our time will focus most of their energy on finding answers to the right questions, rather than on demagoguery around exaggerated threats.

Source: hi-news.ru