Artificial intelligence: extinction or immortality?

This is the second part of an article in the series "Wait, how can all this be real, and why isn't everyone talking about it?". An intelligence explosion is gradually creeping up on the people of planet Earth, as AI tries to evolve from narrow intelligence to human-level intelligence and, finally, to artificial superintelligence.

"Before us lies what may be an extremely difficult problem, with an unknown amount of time to solve it, and the entire future of humanity may depend on its solution." (Nick Bostrom)

The first part of the article started innocently enough. We discussed artificial narrow intelligence (ANI, which specializes in a single task such as plotting routes or playing chess), of which there is already plenty in our world. Then we looked at why it is so hard to grow from ANI to artificial general intelligence (AGI, or AI whose intellectual abilities match a human's on any problem). We concluded that the exponential pace of technological progress implies that AGI might appear fairly soon. In the end we decided that once machines reach human-level intelligence, the following could happen:

As usual, we stare at the screen, not quite believing that artificial superintelligence (ASI, which is much smarter than any human) may appear in our lifetime, and trying to pick the emotion that best reflects our opinion on the matter.

Before we delve into the specifics of ASI, let's remind ourselves what it means for a machine to be superintelligent.

The key distinction is between speed superintelligence and quality superintelligence. Often the first thing that comes to mind when imagining a superintelligent computer is that it thinks much faster than a human, millions of times faster, and can work out in five minutes what would take a person ten years. ("I know kung fu!")

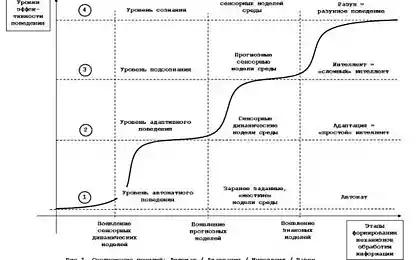

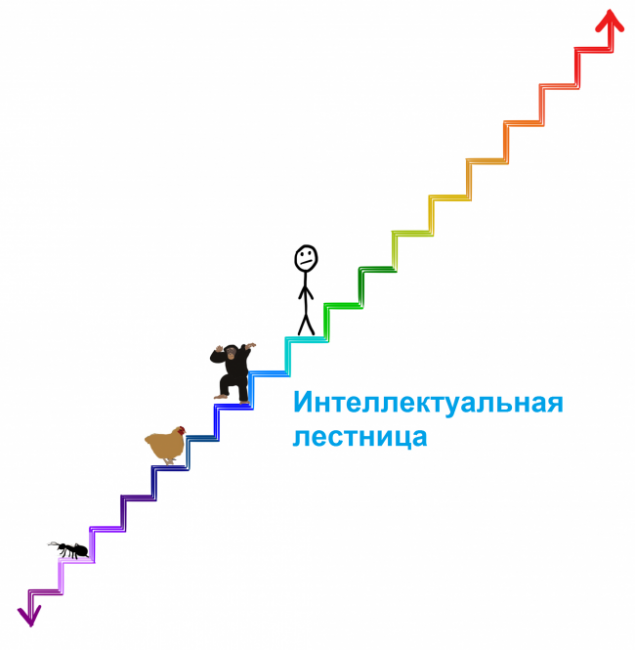

That sounds impressive, and ASI really would think faster than any human, but the true dividing line would be the quality of its intelligence, which is something else entirely. Humans are much smarter than monkeys not because they think faster but because human brains contain a number of sophisticated cognitive modules that enable complex linguistic representation, long-term planning, and abstract thought, none of which monkeys are capable of. Speeding up a monkey's brain a thousand times would not make it smarter than us: even with ten years to try, it could not assemble a construction set from the instructions, something a person would manage in a couple of hours at most. There are things a monkey will never learn, no matter how many hours it spends or how fast its brain runs.

Moreover, a monkey cannot think the way humans do, because its brain cannot grasp that such other worlds exist. A monkey can learn what a human is and what a skyscraper is, but it will never understand that the skyscraper was built by humans. In its world everything belongs to nature, and a monkey not only cannot build a skyscraper, it cannot even understand that anyone can build one. And all of that is the result of a small difference in the quality of intelligence.

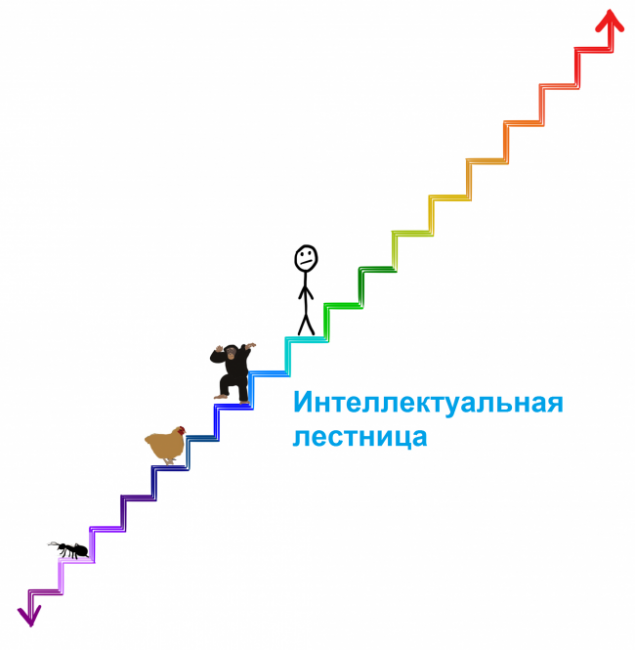

On the overall scale of intelligence we are discussing, or even just by the standards of biological beings, the difference in quality of intelligence between humans and monkeys is tiny. In the previous article we placed biological cognitive abilities on a staircase:

To understand how significant a superintelligent machine would be, place it two steps above humans on that staircase. Such a machine would be only slightly superintelligent, yet its advantage over our cognitive abilities would be as great as ours over monkeys. And just as a chimp will never grasp that a skyscraper can be built, we might never understand what a machine a couple of steps higher understands, even if it tried to explain it to us. And that is only a couple of steps. A machine smarter still would see us as ants: it could spend years trying to teach us the simplest things it knows, and the attempts would be hopeless.

The kind of superintelligence we are discussing today lies far beyond this staircase. In an intelligence explosion, the smarter a machine becomes, the faster it can increase its own intelligence, steadily picking up speed. Such a machine might take years to surpass chimps in intelligence, but perhaps only a couple of hours to climb a couple of steps above us. From then on it could be leaping four steps every second. That is why we should understand that very soon after the first news that a machine has reached human-level intelligence, we could be facing the reality of sharing the Earth with something far above us on the staircase (perhaps millions of steps above):

And since we have established that it is hopeless to try to understand the power of a machine only two steps above us, let's state once and for all that there is no way to know what ASI will do or what the consequences will be for us. Anyone who claims otherwise simply does not understand what superintelligence means.

Evolution developed the biological brain slowly and gradually over hundreds of millions of years, and if humans build a superintelligent machine, in a sense we will have beaten evolution. Or perhaps it will be part of evolution: maybe evolution works in such a way that intelligence develops gradually until it reaches a tipping point that heralds a new future for all living beings:

For reasons we will discuss later, a large part of the scientific community believes that the question is not whether we will reach this tipping point, but when.

Where will we be then?

I don't think anyone in this world, neither I nor you, can say what will happen when we reach the tipping point. Oxford philosopher and leading AI theorist Nick Bostrom believes that all possible outcomes can be boiled down to two broad categories.

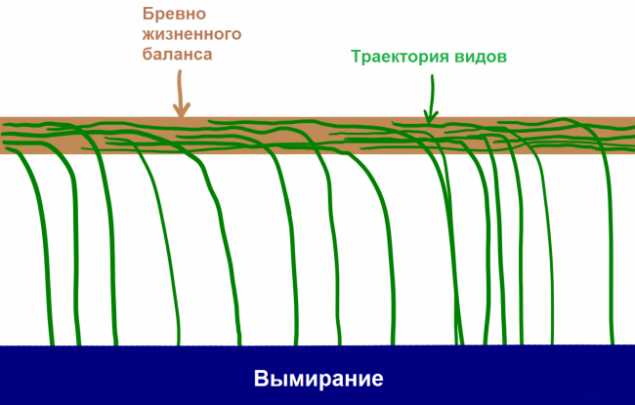

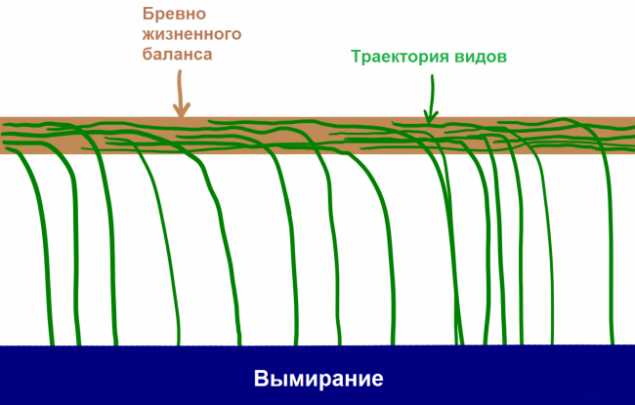

First, looking at history, we know this about life: species appear, exist for a time, and then inevitably fall off the balance beam of life and go extinct.

"All species go extinct" has been as reliable a rule in history as "all humans eventually die." 99.9% of species have fallen off the balance beam, and it seems clear that if a species stays on the beam too long, a gust of natural wind or a sudden asteroid will knock it off. Bostrom calls extinction an attractor state: a place all species are teetering on the edge of falling into, and from which no species has ever returned.

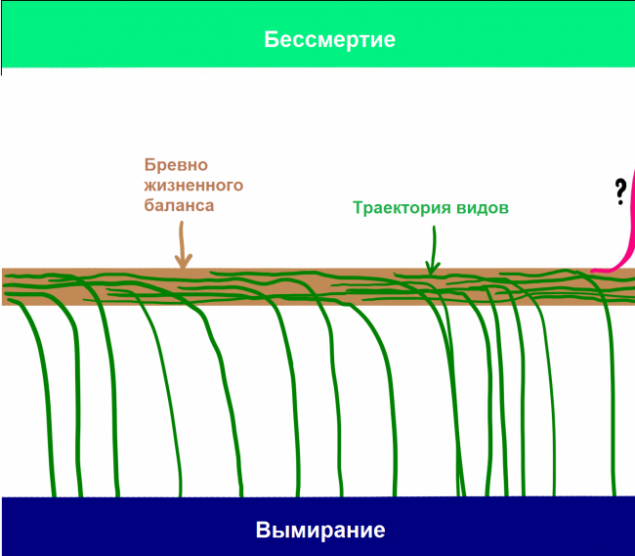

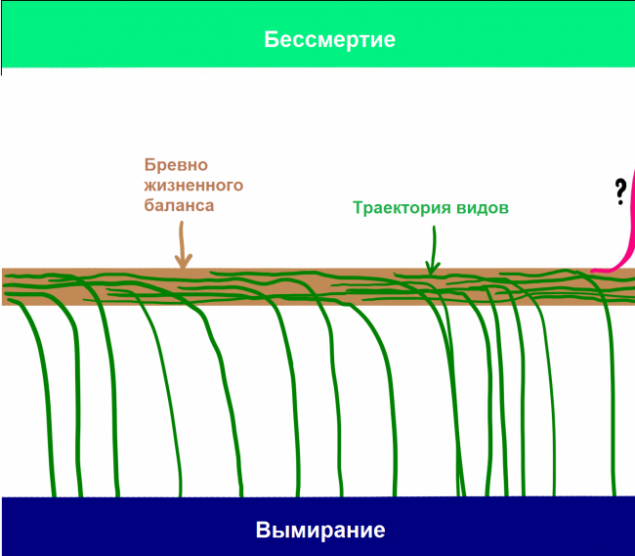

Although most scientists acknowledge that ASI would be capable of dooming humanity to extinction, many also believe that the capabilities of ASI could allow individuals (and the species as a whole) to reach the second attractor state: species immortality. Bostrom believes species immortality is just as much an attractor state as extinction; that is, if we get there, we will be destined to exist forever. So even though every species so far has fallen off the beam into the pool of extinction, Bostrom believes the beam has two sides, and that Earth simply has not yet produced an intelligence that understands how to fall to the other side.

If Bostrom and others are right, and judging from everything available to us they may well be, we need to accept two rather shocking facts:

First, the emergence of ASI will, for the first time in history, open up the possibility for a species to achieve immortality and escape the fatal cycle of extinction. Second, the emergence of ASI will have such an unimaginably huge impact that it will most likely push humanity off the beam in one direction or the other. It is possible that when evolution reaches the tipping point, it always ends humans' relationship with the stream of life and creates a new world, with or without humans.

This leads to one interesting question that only a lazy person would fail to ask: when will we reach this tipping point, and which side will it send us to? Nobody in the world knows the answer to this two-part question, but a lot of smart people have spent decades trying to figure it out. For the rest of this article we will explore what they have come up with.

Let's start with the first part of the question: when will we reach the tipping point? In other words: how much time is left before the first machine reaches superintelligence?

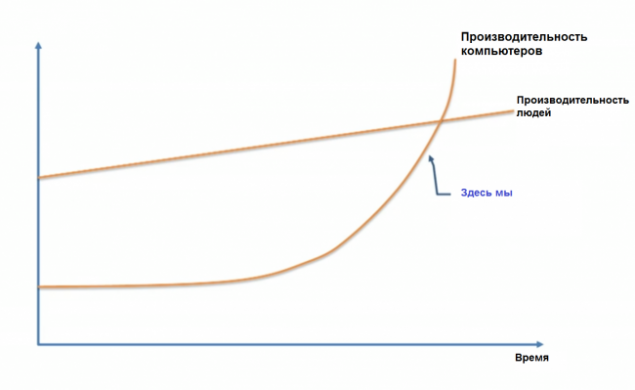

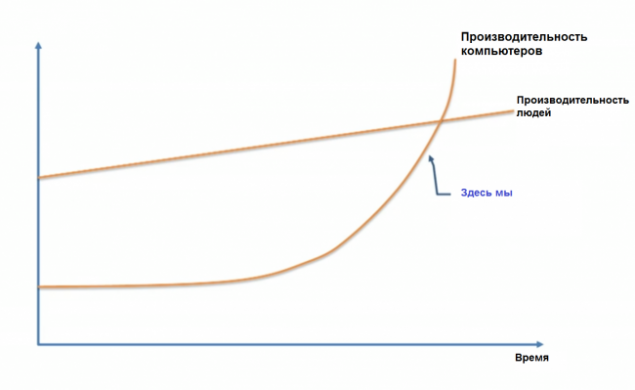

Opinions vary widely. Many, including professor Vernor Vinge, scientist Ben Goertzel, Sun Microsystems co-founder Bill Joy, and futurist Ray Kurzweil, agreed with machine learning expert Jeremy Howard when he presented the following graph in a TED Talk:

These people share the view that ASI will arrive soon: the exponential growth that seems slow to us today will quite literally explode in the next few decades.

Others, such as Microsoft co-founder Paul Allen, research psychologist Gary Marcus, computer scientist Ernest Davis, and tech entrepreneur Mitch Kapor, believe that thinkers like Kurzweil seriously underestimate the scale of the problem, and think that we are not actually that close to the tipping point.

The Kurzweil camp counters that the only underestimation taking place is the disregard for exponential growth, and compares the doubters to those who looked at the slowly sprouting Internet in 1985 and argued that it would have no impact on the world in the near future.

The doubters can parry that when it comes to the exponential development of intelligence, each subsequent step of progress is harder to take, which cancels out the typical exponential nature of technological progress. And so on.

A third camp, which includes Nick Bostrom, agrees with neither the first nor the second, arguing that a) all of this could absolutely happen in the near future, and b) there is no guarantee that it will happen at all, or that it will not take longer.

Still others, like the philosopher Hubert Dreyfus, believe all three groups are naive to think there is a tipping point at all, and that most likely we will never get to ASI.

What happens when we put all these opinions together?

In 2013, Bostrom conducted a survey of hundreds of artificial intelligence experts at a series of conferences, asking: "What is your prediction for when human-level AGI will be achieved?" Respondents were asked to name an optimistic year (one by which there is a 10 percent chance we will have AGI), a realistic guess (a year by which there is a 50 percent chance), and a safe guess (the earliest year by which it will have appeared with 90 percent probability). Here are the results:

A separate study conducted recently by James Barrat (author of the acclaimed and very good book "Our Final Invention", excerpts from which I have presented to Hi-News.ru readers) and Ben Goertzel at the annual AGI Conference simply asked participants by which year they thought we would reach AGI: by 2030, 2050, 2100, later, or never. Here are the results:

But AGI is not the tipping point; ASI is. When do experts think we will have ASI?

Bostrom asked the experts when we would reach ASI: a) within two years of achieving AGI (that is, almost immediately, due to an intelligence explosion), or b) within 30 years. The results?

The median view was that a rapid transition from AGI to ASI has a 10% probability, while a transition within 30 years or less has a 75% probability.

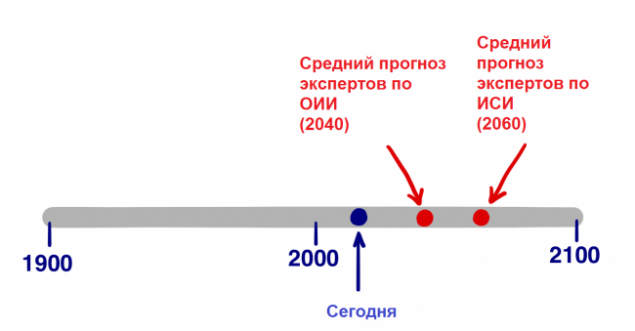

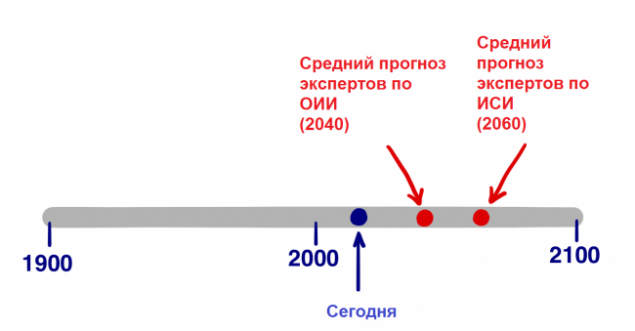

These data do not tell us what date respondents would have named for a 50 percent chance of ASI, but based on the two answers above, let's assume it is 20 years. That is, the world's leading AI experts believe the tipping point will come around 2060 (AGI will appear in 2040, plus about 20 years for the transition from AGI to ASI).

Of course, all of these statistics are speculative and merely represent the opinions of experts in the field of artificial intelligence, but they do indicate that most of the people concerned agree that ASI will likely have arrived by 2060. That is just 45 years away.

Let's move on to the second part of the question. When we reach the tipping point, which side of the beam will we fall to?

Superintelligence will wield tremendous power, and the critical question for us is:

Who or what will control that power, and what will their motivation be?

The answer will determine whether ASI is an unbelievably great development, an immeasurably terrifying development, or something in between.

Of course, the expert community is trying to answer these questions too. Bostrom's survey looked at the likelihood of the possible consequences of AGI's impact on humanity and found a 52 percent chance that things will turn out well and a 31 percent chance that they will turn out bad or very bad. The survey attached to the end of the previous part of this series, conducted among you, dear Hi-News readers, showed roughly the same results. A relatively neutral outcome was given only a 17% chance. In other words, we all believe the arrival of AGI will be a momentous event. It is also worth noting that this survey concerns the arrival of AGI; for ASI, the share of neutral answers would be even lower.

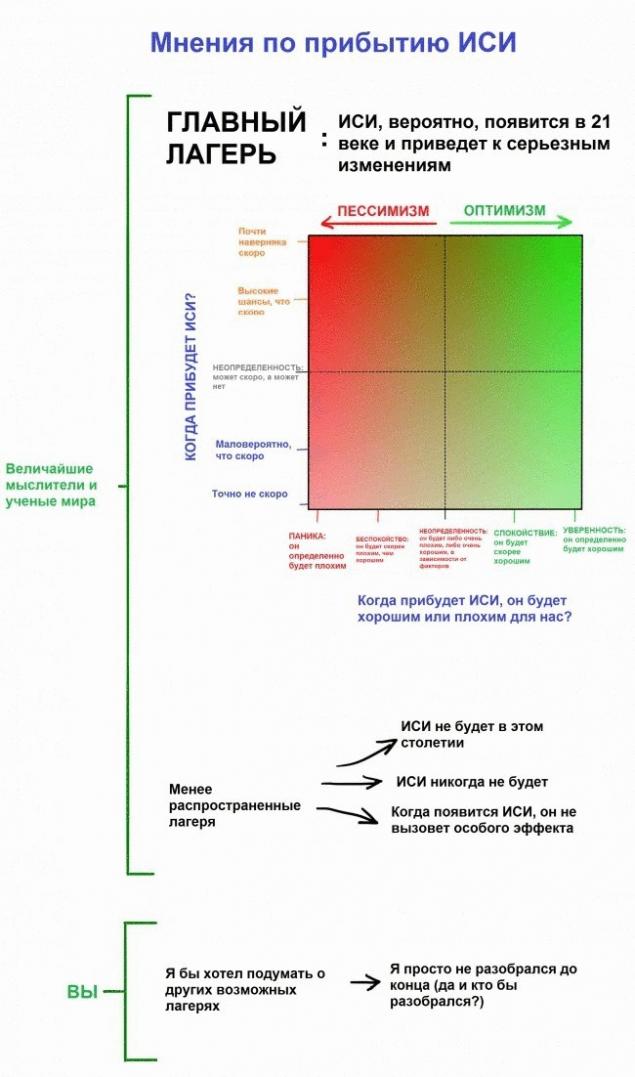

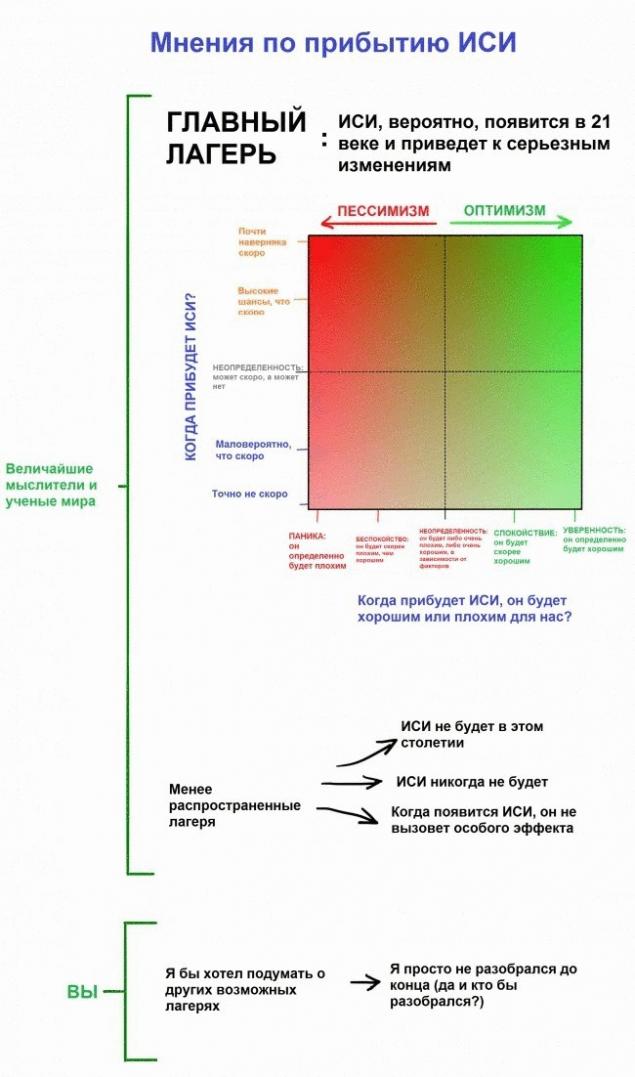

Before we go further into the good and bad sides of the question, let's combine both parts, "when will this happen?" and "will it be good or bad?", into a chart that covers the views of most experts.

We will talk about the main camp in a minute, but first decide where you stand. Most likely you are in about the same place I was before I started looking into this topic. There are several reasons why people do not think about this subject at all:

In the course of research it becomes clear that most people's views quickly drift toward the "main camp", and three quarters of the experts fall into two subcamps within it.

We will visit both of these camps. Let's start with the fun one.

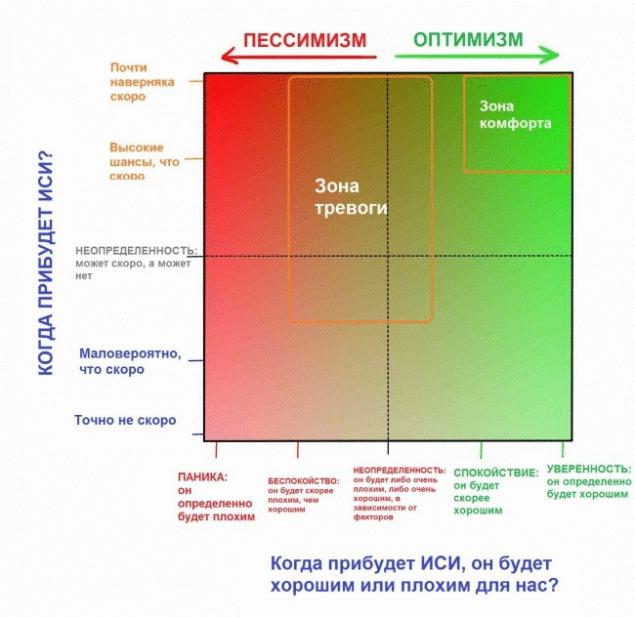

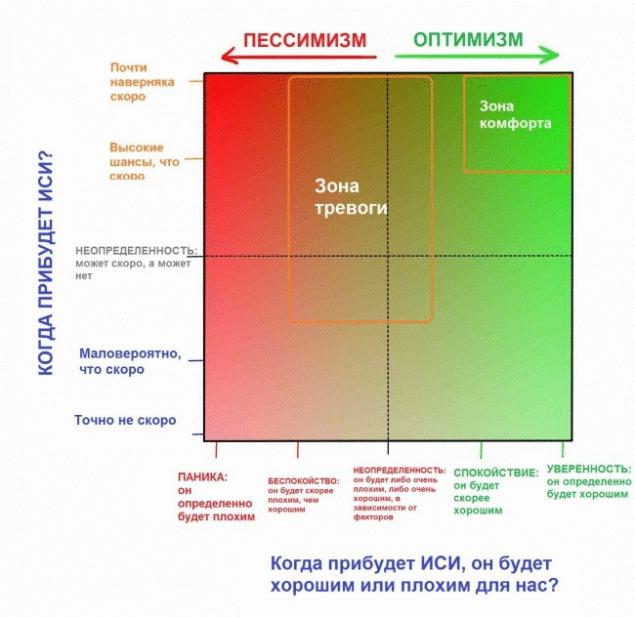

Why might the future be our greatest dream?

As we explore the world of AI, we find a surprising number of people in the comfort zone. The people in the upper right quadrant are buzzing with excitement. They believe we will fall on the good side of the beam and are confident that we will inevitably get there. For them, the future is everything one could ever dream of.

What sets these people apart from other thinkers is not that they want to be on the happy side, but that they are confident that is where we are headed.

Where this confidence comes from is a matter of debate. Critics believe it comes from an excitement so blinding that it obscures potential negative outcomes. Supporters reply that doomsday predictions are always naive: technology has always helped us more than it has harmed us, and will continue to.

You are free to choose either view, but set skepticism aside for now and take a good look at the happy side of the balance beam, trying to accept that everything you read about might really happen. If you showed hunter-gatherers our world of comfort, technology, and endless abundance, it would seem like magical fiction to them, and we should be humble enough to admit that an equally unfathomable transformation may await us in the future.

Nick Bostrom describes three ways a superintelligent AI system could function:

Eliezer Yudkowsky, an American artificial intelligence researcher, put it well:

"There are no hard problems, only problems that are hard for a certain level of intelligence. Move one step up (in level of intelligence), and some problems will suddenly move from "impossible" to "obvious". Move another notch up, and all of them will become obvious."

There are plenty of eager scientists, inventors, and entrepreneurs who have chosen the comfort zone in our chart, but for a tour of the best in this best of all possible worlds we need only one guide.

Ray Kurzweil provokes mixed feelings. Some idolize his ideas; others despise them. Some sit in the middle: Douglas Hofstadter, discussing the ideas in Kurzweil's books, eloquently remarked that "it's as if you took lots of good food and some dog poop, and then mixed everything so that it is impossible to tell what is good and what is bad."

Whether you like his ideas or not, it is impossible to pass them by without at least some interest. He began inventing things as a teenager and went on to invent several important things, including the first flatbed scanner, the first scanner that converts text to speech, the well-known Kurzweil music synthesizer (the first true electric piano), and the first commercially successful speech recognizer. He is also the author of five acclaimed books. Kurzweil is valued for his bold predictions, and his track record is quite good: in the late 1980s, when the Internet was still in its infancy, he predicted that by the 2000s the Web would become a global phenomenon. The Wall Street Journal called Kurzweil "the restless genius", Forbes "the ultimate thinking machine", Inc. Magazine "the rightful heir to Edison", and Bill Gates "the best at predicting the future of artificial intelligence". In 2012, Google co-founder Larry Page invited Kurzweil to become Director of Engineering. In 2011 he co-founded Singularity University, which is hosted by NASA and partly sponsored by Google.

His biography matters. When Kurzweil describes his vision of the future, it sounds crazy, but the truly crazy thing is that he is not crazy at all: he is an incredibly smart, educated, and sensible person. You may think his forecasts are wrong, but he is no fool. Kurzweil's predictions are shared by many "comfort zone" experts, such as Peter Diamandis and Ben Goertzel. Here is what he thinks will happen.

Kurzweil believes that computers will reach the level of artificial general intelligence (AGI) by 2029, and that by 2045 we will have not only artificial superintelligence but a whole new world, the so-called singularity. His AI timeline is still considered by many to be an outrageous exaggeration, but over the last 15 years the rapid progress of artificial narrow intelligence (ANI) systems has brought many experts over to Kurzweil's side. His predictions are still more ambitious than those in Bostrom's survey (AGI by 2040, ASI by 2060), but not by much.

According to Kurzweil, the singularity of 2045 will be brought about by three simultaneous revolutions in biotechnology, nanotechnology, and, most importantly, AI. But before we continue, since nanotechnology follows closely on the heels of artificial intelligence, let's take a minute to talk about nanotechnology.

A few words about nanotechnology

Nanotechnology is what we usually call technology that deals with the manipulation of matter in the range of 1 to 100 nanometers. A nanometer is one billionth of a meter, or one millionth of a millimeter; the range of 1 to 100 nanometers covers viruses (100 nm across), DNA (10 nm wide), hemoglobin molecules (5 nm), glucose (1 nm), and more. If we ever master nanotechnology, the next step will be manipulating individual atoms, which are one order of magnitude smaller (~0.1 nm).

To understand where people run into trouble when trying to manipulate matter at this scale, let's look at it on a larger scale. The International Space Station is 481 kilometers above the Earth. If people were giants whose heads touched the ISS, they would be 250,000 times bigger than they are now. Scale the 1 to 100 nanometer range up 250,000 times and you get 0.25 millimeters to 2.5 centimeters. So nanotechnology is the equivalent of a human giant as tall as the ISS's orbit trying to manipulate things the size of a grain of sand or an eyeball. To reach the next level, controlling individual atoms, the giant would have to carefully position objects 1/40 of a millimeter across. Ordinary people would need a microscope to see them.
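The scaling in the giant analogy is plain multiplication, and a minimal Python sketch can check it. The factor of 250,000 and the sizes in nanometers come from the text; the function name `scaled_size_mm` is purely illustrative.

```python
# Scale factor taken from the text: a giant whose head reaches the ISS
# would be about 250,000 times bigger than an ordinary person.
SCALE = 250_000

NM_PER_MM = 1_000_000  # 1 millimeter = 1,000,000 nanometers


def scaled_size_mm(size_nm: float, scale: int = SCALE) -> float:
    """Return, in millimeters, what `size_nm` nanometers becomes after scaling up."""
    return size_nm * scale / NM_PER_MM


# Sizes quoted in the text: the nanotech range (1 to 100 nm) and a single atom (~0.1 nm).
for label, size_nm in [
    ("lower bound of nanotech range", 1),
    ("upper bound of nanotech range", 100),
    ("single atom", 0.1),
]:
    print(f"{label}: {size_nm} nm -> {scaled_size_mm(size_nm):.3f} mm")
```

Under these assumptions the 1 to 100 nm range maps to roughly 0.25 mm to 25 mm (2.5 cm), and a 0.1 nm atom to about 0.025 mm, which is the 1/40 of a millimeter mentioned in the analogy.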

Richard Feynman was the first to talk about nanotechnology, in 1959. He said: "The principles of physics, as far as I can see, do not speak against the possibility of maneuvering things atom by atom. In principle, a physicist could synthesize any chemical substance that the chemist writes down. How? Put the atoms down where the chemist says, and so you make the substance." It is that simple. If you know how to move individual molecules or atoms, you can do almost anything.

Nanotechnology became a serious scientific field in 1986, when engineer Eric Drexler laid out its foundations in his seminal book "Engines of Creation", but Drexler himself suggests that those who want to learn about the most current ideas in nanotechnology should read his 2013 book "Radical Abundance".

A few words about "gray goo"

Let's go a little deeper into nanotechnology. In particular, "gray goo" is one of the most entertaining topics in the field, and one that cannot go unmentioned. Older versions of nanotechnology theory proposed a method of nanoassembly that involved creating trillions of tiny nanobots that would work together to build something. One way to create trillions of nanobots is to build one that can reproduce itself, going from one to two, from two to four, and so on. Within a day there would be several trillion nanobots. Such is the power of exponential growth. Funny, isn't it?

It is funny, but only until it leads to the apocalypse. The problem is that the power of exponential growth, which makes it such a convenient way to quickly create a trillion nanobots, also makes self-replication a terrifying thing in the long run. What if the system glitches and, instead of stopping replication at a couple of trillion, the nanobots keep on multiplying? What if the whole process runs on carbon? The Earth's biomass contains 10^45 carbon atoms. A nanobot would consist of about 10^6 carbon atoms, so 10^39 nanobots would devour all life on Earth, and that would take just 130 replications. An ocean of nanobots (gray goo) would flood the planet. Scientists think nanobots could replicate in 100 seconds, which means a simple mistake could kill all life on Earth in just 3.5 hours.

It could be worse: nanotechnology could fall into the hands of terrorists and ill-intentioned specialists. They could create a few trillion nanobots and program them to spread quietly around the world for a couple of weeks. Then, at the push of a button, the nanobots would eat everything in just 90 minutes, leaving no chance.

Although this horror story was widely discussed for many years, the good news is that it is just a horror story. Eric Drexler, who coined the term "gray goo", recently said: "People love scary stories, and this one belongs in the same category as zombie stories. The idea itself eats brains."

Once we really master nanotechnology, we will be able to use it to create technical devices, clothing, food, and biological products (blood cells, virus and cancer fighters, muscle tissue, and so on), anything at all. And in a world that uses nanotechnology, the cost of a material will no longer be tied to its scarcity or the difficulty of its manufacturing process, but to the complexity of its atomic structure. In a world of nanotechnology, a diamond could be cheaper than an eraser.
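The gray goo arithmetic above is easy to check. The sketch below, in Python, uses only the figures quoted in the text (10^45 carbon atoms in the Earth's biomass, 10^6 atoms per nanobot, 100 seconds per replication) and assumes, as a simplification, that the population starts from a single nanobot and doubles cleanly at every step. The function name `doublings_needed` is illustrative.

```python
CARBON_ATOMS_IN_BIOMASS = 10**45   # figure quoted in the text
CARBON_ATOMS_PER_NANOBOT = 10**6   # figure quoted in the text
SECONDS_PER_REPLICATION = 100      # figure quoted in the text


def doublings_needed(target: int, start: int = 1) -> int:
    """Return the smallest n such that start * 2**n >= target."""
    n = 0
    population = start
    while population < target:
        population *= 2  # assumption: every nanobot copies itself once per step
        n += 1
    return n


# Number of nanobots that would use up all the carbon in the biomass.
nanobots_needed = CARBON_ATOMS_IN_BIOMASS // CARBON_ATOMS_PER_NANOBOT

doublings = doublings_needed(nanobots_needed)
hours = doublings * SECONDS_PER_REPLICATION / 3600

print(f"nanobots needed: 10^{len(str(nanobots_needed)) - 1}")
print(f"doublings from a single nanobot: {doublings}")
print(f"time at {SECONDS_PER_REPLICATION} s per doubling: {hours:.2f} hours")
```

With these inputs, 130 doublings are enough, which at 100 seconds each comes to a little over three and a half hours, in line with the estimate in the text.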

We are not there yet. And it is not clear whether we are underestimating or overestimating how hard the road will be. Still, everything points to nanotechnology not being far off. Kurzweil predicts that we will have it by the 2020s. Governments know that nanotechnology could promise a great future and are therefore investing many billions in it.

Just imagine the capabilities a superintelligent computer would gain with access to a reliable nanoscale assembler. But nanotechnology is our idea, and we are struggling to master it; it is hard for us. What if, for an ASI system, it is child's play, and ASI comes up with technologies far more powerful than anything we could even conceive of? We agreed, remember: no one can imagine what artificial superintelligence will be capable of. It is thought that our brains cannot predict even the minimum of what will happen.

What could AI do for us?

Armed with superintelligence and all the technology that superintelligence could create, ASI will probably be able to solve all of humanity's problems. Global warming? ASI could first halt carbon dioxide emissions by inventing a host of efficient ways to generate energy that do not involve fossil fuels. Then it could come up with an effective, innovative way to remove excess CO2 from the atmosphere. Cancer and other diseases? Not a problem: health care and medicine would change beyond imagination. World hunger? ASI could use nanotechnology to build meat from scratch that is identical to natural meat; in effect, real meat.

Nanotechnology could turn a pile of garbage into a vat of fresh meat or other food (not necessarily in a familiar form: picture a giant cube of apple) and distribute all that food around the world using advanced transportation systems. Of course, this would be great for animals, which would no longer have to die for food. ASI could also do many other things, like saving endangered species or even bringing back extinct ones from preserved DNA. ASI could solve our hardest macro problems: our most complex debates over economics, ethics and philosophy, and world trade would all be painfully obvious to ASI.

But there is one special thing ASI could do for us, so alluring and tantalizing that it would change everything: ASI could help us deal with mortality. As you gradually come to grasp what AI could do, you may end up rethinking all your ideas about death.

Evolution had no reason to extend our lifespans beyond what they are now. If we live long enough to bear and raise children to the point where they can fend for themselves, that is enough for evolution. From an evolutionary point of view, 30-odd years is plenty of time to develop, and there is no reason for natural selection to favor mutations that extend life. William Butler Yeats described our species as "a soul fastened to a dying animal". Not much fun.

And because everyone has always died, we live with the idea that death is inevitable. We think of aging like time: it keeps moving forward and there is nothing we can do to stop it. But the thought of death is treacherous: preoccupied with it, we forget to live. Richard Feynman wrote:

"It is one of the most remarkable things that in all of the biological sciences there is no clue as to the necessity of death. If you say we want to make perpetual motion, we have discovered enough laws as we studied physics to see that it is either absolutely impossible or else the laws are wrong. But there is nothing in biology yet found that indicates the inevitability of death. This suggests to me that it is not at all inevitable and that it is only a matter of time before the biologists discover what it is that is causing us the trouble and that this terrible universal disease will be cured."

The fact is that aging has nothing to do with time. Aging is what happens when the physical materials of the body wear out. Car parts degrade too, but is that aging inevitable? If you repair a car as its parts wear out, it will run forever. The human body is no different, just more complex.

Kurzweil talks about intelligent, Wi-Fi-connected nanobots in the bloodstream that could perform countless tasks for human health, including routinely repairing or replacing worn-out cells in any part of the body. Perfected, this process (or a smarter alternative devised by ASI) would not just keep the body healthy, it could reverse aging. The difference between the body of a 60-year-old and that of a 30-year-old comes down to a handful of physical things that could be corrected with the right technology. ASI could build a machine that a person would enter as a 60-year-old and leave as a 30-year-old.

Even a deteriorating brain could be refreshed. ASI would surely know how to do it without affecting the brain's data (personality, memories, and so on). A 90-year-old suffering from complete brain degeneration could be retrained, renewed, and returned to the start of their career. It may seem absurd, but the body is a bunch of atoms, and ASI would presumably be able to manipulate any atomic structure with ease. So it is not so absurd.

Kurzweil also believes that artificial materials will be integrated into the body more and more over time. To begin with, organs could be replaced with super-advanced machine versions that would run forever and never fail. Then we could redesign the body entirely, replacing red blood cells with perfected nanobots that propel themselves, eliminating the need for a heart at all. We could also enhance our cognitive abilities, start thinking billions of times faster, and access all the information available to humanity through the cloud.

The possibilities for reaching new horizons would be truly limitless. Humans have managed to give sex a new purpose, engaging in it for pleasure and not just for reproduction. Kurzweil believes we can do the same with food. Nanobots could deliver ideal nutrition directly to the cells of the body while letting unhealthy substances pass straight through.

Nanotechnology theorist Robert Freitas

Source: hi-news.ru

Here is the second part of the article from the "Wait, how can all this be true, why still do not talk about it on every corner". To the people of the planet Earth is gradually creeping up the intelligence explosion, he's trying to evolve from highly directional to the human intellect, and finally, artificial superintelligence.

"Perhaps, before us lies an extremely difficult problem, and it is unknown how much time her decision, but her decision may depend the future of humanity". — Nick Bostrom.The first part of the article started innocently enough. We discussed artificial narrow intelligence (UII, which specializiruetsya on solving one specific task like the definition of routes or playing chess), in our world its much. Then I analyzed why it was so difficult to grow from UII obstaravanie artificial intelligence (AIS, or AI that intellectual capacities can be compared with a human solution to any problem). We came to the conclusion that the exponential pace of technological progress implying that they might appear pretty soon. In the end we decided that once the machines reach human intelligence levels, can occur following:

As usual, we look at the screen, not believing that artificial superintelligence (ISI, which is much smarter than any human) may appear in our lifetime, and choosing the emotion that best reflects our opinion on this issue.

Before we delve into the features of ISI, let's remind ourselves what it means for a machine to be Superintelligent.

The main difference is between fast superintelligence, and quality superintelligence. Often the first thing that comes to mind when thinking about the supermind computer is that it can think much faster than human in millions of times faster, and five minutes to comprehend what the man would take ten years. ("I know kung fu!")

Sounds impressive, and the IRS really ought to think faster than any of the people — but the boundary will be the quality of his intellect, and it is quite another. People are much smarter than monkeys, not because the uptake is faster, but because people's brains contain a number of clever cognitive modules that perform complex linguistic representation, long term planning, abstract thinking that monkeys are not capable. If you overclock the monkey brain a thousand times smarter than us it would not — even after ten years, it will not be able to collect the designer the instructions of what person would take a couple of hours max. There are things that a monkey will never learn, regardless of how many hours you can spend, or how quickly will work her brain.

On top of that, the monkey cannot think the way humans do, because its brain cannot comprehend that other worlds exist: a monkey can know what a human is and what a skyscraper is, but it will never understand that the skyscraper was built by humans. In its world everything belongs to nature, and not only can the monkey not build a skyscraper, it cannot even grasp that anyone can build one. And that is the result of a small difference in the quality of intelligence.

On the overall scale of intelligence we are talking about, or even just by the standards of biological beings, the difference in the quality of intelligence between humans and monkeys is tiny. In the previous article we placed biological cognitive abilities on a staircase:

To understand how formidable a superintelligent machine would be, place it two steps above humans on that staircase. Such a machine would be only slightly superintelligent, yet its superiority over our cognitive abilities would be the same as ours over the monkey's. And just as a chimp will never grasp that a skyscraper can be built, we may never understand what a machine a couple of steps higher understands, even if the machine tries to explain it to us. And that is only a couple of steps. A machine higher still would see us the way we see ants: it could spend years trying to teach us the things that are simplest from its point of view, and the attempts would be hopeless.

The type of superintelligence we will discuss today lies far beyond this staircase. In an intelligence explosion, the smarter a machine becomes, the faster it can increase its own intelligence, steadily picking up speed. Such a machine might take years to surpass the chimp in intelligence, but perhaps only a couple of hours to pass us by a couple of steps. From then on, the machine may be jumping four steps every second. That is why we should understand that very soon after the first news that a machine has reached human-level intelligence, we may face the reality of sharing the Earth with something far above us on the staircase (perhaps millions of steps above):

And since we have established that it is quite useless to try to understand the power of a machine that is only two steps above us, let's state once and for all that there is no way to know what an ASI will do or what the consequences will be for us. Anyone who claims otherwise simply does not understand what superintelligence means.

Evolution developed the biological brain slowly and gradually over hundreds of millions of years, and if humans build a superintelligent machine, in a sense we will have outdone evolution. Or perhaps it will be part of evolution: maybe evolution works in such a way that intelligence develops gradually until it reaches a tipping point that heralds a new future for all living things:

For reasons we will discuss later, a large part of the scientific community believes that the question is not whether we will reach this tipping point, but when.

Where will we be then?

I think no one in this world, neither I nor you, can say what will happen when we reach the tipping point. Oxford philosopher and leading AI theorist Nick Bostrom believes that all the possible outcomes boil down to two broad categories.

First, looking at history, here is what we know about life: species appear, exist for a while, and then inevitably fall off the balance beam of life and go extinct.

"All species go extinct" has been as reliable a rule in history as "all humans eventually die." 99.9% of species have fallen off the beam, and it seems clear that if a species balances on the beam for too long, a gust of nature's wind or a sudden asteroid will knock it off. Bostrom calls extinction an attractor state: a place that all species teeter over while trying not to fall into, and from which no species has ever returned.

Although most scientists acknowledge that ASI would be able to doom humans to extinction, many also believe that the capabilities of ASI could allow individuals (and the species as a whole) to reach a second attractor state: species immortality. Bostrom believes that species immortality is just as much an attractor state as extinction; that is, if we get there, we are bound to exist forever. So even though every species so far has fallen off the beam into the pool of extinction, Bostrom believes the beam has two sides, and that nothing intelligent enough to work out how to fall to the other side has simply appeared on Earth yet.

If Bostrom and others are right, and judging by all the information available to us they may well be, we need to take in two rather shocking facts:

- The emergence of ASI will, for the first time in history, open up the possibility for a species to achieve immortality and drop out of the fatal cycle of extinction.

- The emergence of ASI will have such an unimaginably huge impact that it is likely to push humanity off the beam in one direction or the other.

It may well be that when evolution reaches the tipping point, it always puts an end to humans' relationship with the stream of life and creates a new world, with or without humans.

This raises an interesting question that only the lazy would fail to ask: when will we reach this tipping point, and which way will it send us? No one in the world knows the answer to this two-part question, but a lot of smart people have spent decades trying to work it out. For the rest of this article we will look at what they have come up with.

Let's start with the first part of the question: when will we reach the tipping point? In other words, how much time is left before the first machine reaches superintelligence?

Opinions vary widely. Many, including professor Vernor Vinge, scientist Ben Goertzel, Sun Microsystems co-founder Bill Joy, and futurist Ray Kurzweil, agreed with machine learning expert Jeremy Howard when he presented the following graph in a TED Talk:

These people share the view that ASI will arrive soon: that exponential growth, which seems slow to us today, will literally explode in the next few decades.

Others, like Microsoft co-founder Paul Allen, research psychologist Gary Marcus, computer scientist Ernest Davis, and tech entrepreneur Mitch Kapor, believe that thinkers like Kurzweil seriously underestimate the scale of the problem, and that we are not all that close to the tipping point.

The Kurzweil camp counters that the only underestimation going on is the failure to appreciate exponential growth, and compares the doubters to those who looked at the slowly emerging Internet in 1985 and argued that it would have no impact on the world in the near future.

The doubters can parry by saying that each successive step in the exponential development of intelligence is harder to make, which cancels out the typical exponential nature of technological progress. And so on.

A third camp, which includes Nick Bostrom, agrees with neither the first nor the second, arguing that a) all of this absolutely could happen in the near future; and b) there is no guarantee that it will; it may also take much longer.

Still others, like philosopher Hubert Dreyfus, believe that all three of these groups are naive to believe in a tipping point at all, and that most likely we will never get to ASI.

What happens when we put all these opinions together?

In 2013, Bostrom conducted a survey in which hundreds of AI experts at a series of conferences were asked: "What is your forecast for when human-level AGI will be achieved?" They were asked to name an optimistic year (one in which we have a 10 percent chance of having AGI), a realistic guess (a year in which there is a 50 percent chance of AGI), and a safe guess (the earliest year by which AGI will have appeared with 90 percent probability). Here are the results:

- Average optimistic year (10%): 2022

- Average realistic year (50%): 2040

- Average pessimistic year (90%): 2075

A separate study, conducted recently by James Barrat (author of the acclaimed and very good book "Our Final Invention", excerpts from which I have presented to Hi-News.ru readers) and Ben Goertzel at the annual AGI Conference, simply asked participants by which year they thought we would reach AGI: by 2030, 2050, 2100, later, or never. Here are the results:

- By 2030: 42% of respondents

- By 2050: 25%

- By 2100: 20%

- After 2100: 10%

- Never: 2%

But AGI is not the tipping point; artificial superintelligence (ASI) is. When, according to the experts, will we have ASI?

Bostrom also asked the experts when we will reach ASI: a) within two years of achieving AGI (that is, almost instantly, through an intelligence explosion); or b) within 30 years. The results?

The average view was that a rapid transition from AGI to ASI (within two years) has a 10% probability, while a transition within 30 years or less has a 75% probability.

From this data we do not know what date respondents would have given for a 50 percent chance of ASI, but based on the two answers above, let's assume it is 20 years. That is, the world's leading AI experts believe that the tipping point will come in 2060 (AGI appears in 2040, plus about 20 years for the transition from AGI to ASI).
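One way to sanity-check the 20-year guess is to interpolate between the two survey points (10% within 2 years, 75% within 30 years). The sketch below assumes the probability grows linearly between those points, which is an illustrative assumption and not something the survey or this article states; the function name `interpolate_years` is hypothetical.

```python
def interpolate_years(p_target, p_low, years_low, p_high, years_high):
    """Linearly interpolate the delay (in years) at which the
    cumulative probability reaches p_target."""
    fraction = (p_target - p_low) / (p_high - p_low)
    return years_low + fraction * (years_high - years_low)


AGI_MEDIAN_YEAR = 2040  # 50% year for human-level AI from the survey above

# Survey points: 10% chance within 2 years, 75% chance within 30 years
delay = interpolate_years(0.50, 0.10, 2, 0.75, 30)

print(f"Estimated 50% delay: {delay:.1f} years")
print(f"Estimated tipping point: {AGI_MEDIAN_YEAR + round(delay)}")
```

Under that assumption the 50 percent point falls at a little over 19 years, which is consistent with rounding to 20 years and a tipping point around 2060.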

Of course, all of the above statistics are speculative and merely represent the opinions of experts in the field of artificial intelligence, but they also indicate that most of the people concerned agree that ASI is likely to arrive by 2060. That is just 45 years away.

Let's move on to the second part of the question. When we reach the tipping point, which side of the beam will we fall to?

Superintelligence will wield enormous power, and the critical question for us is:

Who or what will control this power, and what will their motivation be?

The answer to this question will determine whether ASI turns out to be an incredibly wonderful development, an immeasurably terrifying development, or something in between.

Of course, the expert community is trying to answer these questions too. Bostrom's survey looked at the likelihood of the possible consequences of AGI for humanity and found a 52 percent chance that things will go well and a 31 percent chance that they will go badly or very badly. The survey attached to the end of the previous part of this series, conducted among you, dear Hi-News readers, showed roughly the same results. The probability of a relatively neutral outcome was only 17%. In other words, we all believe that the arrival of AGI will be a momentous event. It is also worth noting that this survey concerns the arrival of AGI; in the case of ASI, the share of neutral outcomes would be lower.

Before we go deeper into the good and bad sides of the question, let's combine both parts of it, "when will this happen?" and "will it be good or bad?", into a table that covers the views of most experts.

We will talk about the main camp in a minute, but first decide where you stand. Most likely you are in the same place I was before I started working on this topic. There are several reasons why people do not think about this subject at all:

- As mentioned in the first part, movies have seriously confused things by presenting unrealistic AI scenarios, which has led us not to take AI seriously at all. James Barrat compared the situation to how we would react if the Centers for Disease Control issued a serious warning about vampires in our future.

- Because of cognitive biases, it is very hard for us to believe something is real until we see proof. We can easily imagine the computer scientists of 1988 discussing the far-reaching consequences of the Internet and what it might become, while ordinary people hardly believed it would change their lives until it actually did. Computers simply could not do such things in 1988, so people looked at their computers and thought, "Seriously? This is what will change the world?" Their imagination was limited by what personal experience had taught them: they knew what a computer was, and it was hard to picture what computers would be capable of in the future. The same thing is happening now with AI. We hear that it will be a big deal, but because we have not yet met it face to face, and mostly see rather weak manifestations of AI in the modern world, we find it hard to believe that it will radically change our lives. These are the biases that numerous experts from all camps, along with other interested people, are up against as they try to get our attention through the noise of everyday collective self-absorption.

- Even if we did believe all this: how many times today have you thought about the fact that you will spend the rest of eternity in nothingness? Not many, right? Even though that fact is far more important than anything you do from day to day. That is because our brains normally focus on small everyday things, no matter how crazy the long-term situation we are in may be. It is just how we are wired.

As you research the topic, it becomes clear that most people's views quickly shift toward the "main camp", and three quarters of the experts fall into two subcamps within the main camp.

We will visit both of these camps. Let's start with the fun stuff.

Why the future might be our greatest dream

As we explore the world of AI, we discover a surprising number of people in the comfort zone. The people in the upper right quadrant are buzzing with excitement. They believe we will land on the good side of the beam, and they are also confident that we will inevitably get there. For them, the future is everything one could ever dream of.

What sets these people apart from other thinkers is not that they want to be on the happy side, but that they are confident it is what awaits us.

Where this confidence comes from is a matter of debate. Critics believe it comes from blinding excitement that overshadows the potential downsides. Supporters reply that gloomy predictions always turn out to be naive; technology continues to help us more than it harms us, and always will.

You are free to choose either view, but set skepticism aside for a moment and take a good look at the happy side of the balance beam, trying to accept that everything you read about might actually happen. If you showed hunter-gatherers our world of comfort, technology, and endless abundance, it would seem like magical fiction to them, and we should be modest enough to admit that an equally unfathomable transformation may await us in the future.

Nick Bostrom describes three ways in which a superintelligent AI system could function:

- An oracle, which can answer any precisely posed question, including difficult questions that humans cannot answer, for example, "how can a car engine be made more efficient?" Google is a primitive kind of oracle.

- A genie, which carries out any high-level command (for example, using a molecular assembler to build a new, more efficient version of a car engine) and then waits for the next command.

- A sovereign, which has broad access and the ability to operate freely in the world, making its own decisions and improving the process. It would invent a cheaper, faster, and safer means of private transportation than the car.

Eliezer Yudkowsky, an American AI researcher, put it well:

"There are no hard problems, only problems that are hard to a certain level of intelligence. Move up one step (in terms of intelligence), and some problems will suddenly move from "impossible" to "obvious". Move up another notch, and all of them will become obvious."

There are a lot of eager scientists, inventors, and entrepreneurs who have picked the comfort zone on our table, but for a tour of the best in this best of all possible worlds we need only one guide.

Ray Kurzweil evokes mixed feelings. Some idolize his ideas, some despise them. Some stay in the middle: Douglas Hofstadter, discussing the ideas in Kurzweil's books, eloquently remarked that "it's as if you took a lot of good food and some dog poop, and then mixed everything up so that it is impossible to tell what is good and what is bad."

Whether you like his ideas or not, it is impossible to pass them by without at least a flicker of interest. He began inventing things as a teenager, and in later years he invented several important things, including the first flatbed scanner, the first scanner that converted text to speech, the well-known Kurzweil music synthesizer (the first true electric piano), and the first commercially successful speech recognizer. He is also the author of five acclaimed books. Kurzweil is valued for his bold predictions, and his track record is quite good: in the late 1980s, when the Internet was still in its infancy, he predicted that by the 2000s the Web would become a global phenomenon. The Wall Street Journal called Kurzweil "the restless genius", Forbes "the ultimate thinking machine", Inc. Magazine "Edison's rightful heir", and Bill Gates "the best person I know at predicting the future of artificial intelligence". In 2012, Google co-founder Larry Page invited Kurzweil to become Director of Engineering. In 2011 he co-founded Singularity University, which is hosted by NASA and partly sponsored by Google.

His biography matters. When Kurzweil describes his vision of the future, it sounds crazy, but the really crazy thing is that he is far from crazy: he is an incredibly smart, educated, and sensible person. You may think his forecasts are wrong, but he is no fool. Kurzweil's predictions are shared by many "comfort zone" experts, such as Peter Diamandis and Ben Goertzel. Here is what he thinks will happen.

Kurzweil believes that computers will reach the level of artificial general intelligence (AGI) by 2029, and that by 2045 we will have not only artificial superintelligence but a whole new world: the so-called singularity. His AI timeline is still considered a wild exaggeration by some, but over the last 15 years the rapid development of artificial narrow intelligence (ANI) systems has brought many experts over to Kurzweil's side. His predictions remain more ambitious than those in Bostrom's survey (AGI by 2040, ASI by 2060), but not by much.

According to Kurzweil, the singularity of 2045 will be driven by three simultaneous revolutions in biotechnology, nanotechnology, and, most importantly, AI. But before we continue, and since nanotechnology follows closely behind artificial intelligence, let's take a minute to talk about nanotechnology.

A few words about nanotechnology

By nanotechnology we usually mean technologies that manipulate matter in the range of 1-100 nanometers. A nanometer is one billionth of a meter, or one millionth of a millimeter; the 1-100 nanometer range covers viruses (100 nm across), DNA (10 nm wide), hemoglobin molecules (5 nm), glucose (1 nm), and more. If we ever master nanotechnology, the next step will be manipulating individual atoms, which are just one order of magnitude smaller (~0.1 nm).

To understand where the difficulty lies in trying to manipulate matter at such a scale, let's move to a larger scale. The International Space Station is 481 kilometers above the Earth. If humans were giants whose heads touched the ISS, they would be 250,000 times bigger than they are now. If you enlarge something 1 to 100 nanometers in size by a factor of 250,000, you get 0.25 millimeters to 2.5 centimeters. Nanotechnology is thus the equivalent of a human as tall as the ISS's orbit trying to handle objects the size of a grain of sand or an eyeball. To reach the next level, controlling individual atoms, the giant would have to carefully position objects 1/40 of a millimeter across. Ordinary-sized people would need a microscope to see them.
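The scaling in this analogy is easy to check. The short sketch below simply multiplies the sizes quoted in the text by the 250,000 factor; the function and constant names are hypothetical, chosen only for this illustration.

```python
# Scale factor from the text: a giant whose head reaches the ISS
# is about 250,000 times taller than an ordinary person.
SCALE = 250_000

NM_PER_MM = 1_000_000  # 1 millimeter = 1,000,000 nanometers


def scaled_size_mm(size_nm, scale=SCALE):
    """Size in millimeters of an object size_nm nanometers across
    after enlarging it by the given scale factor."""
    return size_nm * scale / NM_PER_MM


nanotech_low_mm = scaled_size_mm(1)     # lower end of the nanotech range
nanotech_high_mm = scaled_size_mm(100)  # upper end of the nanotech range
atom_mm = scaled_size_mm(0.1)           # a single atom, roughly 0.1 nm

print(f"1 nm   scales to about {nanotech_low_mm:.3f} mm")
print(f"100 nm scales to about {nanotech_high_mm:.3f} mm")
print(f"0.1 nm scales to about {atom_mm:.3f} mm")
```

The results correspond to the grain of sand (0.25 mm), the eyeball (25 mm, or 2.5 cm), and the 1/40 of a millimeter (0.025 mm) mentioned above.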

Richard Feynman was the first to talk about nanotechnology, in 1959. He said: "The principles of physics, as far as I can see, do not speak against the possibility of maneuvering things atom by atom. In principle, a physicist could synthesize any chemical substance that the chemist writes down. How? Put the atoms down where the chemist says, and so you make the substance." It is that simple. If you know how to move individual molecules or atoms, you can make almost anything.

Nanotechnology became a serious scientific field in 1986, when engineer Eric Drexler laid out its foundations in his seminal book "Engines of Creation". Drexler himself, however, suggests that those who want to learn about current ideas in nanotechnology should read his 2013 book "Radical Abundance".

A few words about "gray goo"

Let's go a little deeper into nanotechnology, and in particular into "gray goo", one of the least pleasant topics in the field, but one that has to be mentioned. Older versions of nanotechnology theory proposed a method of nanoassembly that involved creating trillions of tiny nanobots that would work together to build something. One way to create trillions of nanobots is to make one that can replicate itself, so that one becomes two, two become four, and so on. Within a day there would be several trillion nanobots. Such is the power of exponential growth. Funny, isn't it?

It is funny, but only until it leads to the apocalypse. The problem is that the same power of exponential growth that makes self-replication such a convenient way to quickly create a trillion nanobots also makes it a terrifying thing in the long run. What if the system glitches, and instead of stopping replication at a couple of trillion, the nanobots keep multiplying? What if the whole process runs on carbon? The Earth's biomass contains 10^45 carbon atoms. A nanobot would consist of about 10^6 carbon atoms, so 10^39 nanobots would devour all life on Earth, and that would take just 130 replications. An ocean of nanobots (gray goo) would flood the planet. Scientists think nanobots could replicate in 100 seconds, which means a simple mistake could kill all life on Earth in about 3.5 hours.

It could be worse: nanotechnology could fall into the hands of terrorists or ill-intentioned specialists. They could create a few trillion nanobots and program them to spread quietly around the world over a couple of weeks. Then, at the push of a button, they would eat everything in just 90 minutes, leaving no chance.

Although this horror story has been widely discussed for many years, the good news is that it is only a horror story. Eric Drexler, who coined the term "gray goo", recently said: "People love horror stories, and this one belongs in the same category as zombie stories. The idea itself eats brains."

Once we get to the bottom of nanotechnology, we will be able to use it to create technical devices, clothing, food, and biological products (blood cells, virus and cancer fighters, muscle tissue, and so on): anything at all. And in a world that uses nanotechnology, the cost of a material will no longer be tied to its scarcity or the complexity of its manufacturing process, but to the complexity of its atomic structure. In a world of nanotechnology, a diamond could be cheaper than an eraser.
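The gray goo arithmetic quoted earlier (10^45 carbon atoms, 10^6 atoms per nanobot, 100 seconds per replication) can be reproduced with a few lines of code. This is only a check of the numbers in the text, assuming the population doubles exactly once per replication cycle, starting from a single nanobot; the variable names are hypothetical.

```python
BIOMASS_CARBON_ATOMS = 10**45   # carbon atoms in Earth's biomass (from the text)
ATOMS_PER_NANOBOT = 10**6       # carbon atoms per nanobot (from the text)
SECONDS_PER_REPLICATION = 100   # replication time (from the text)

# Number of nanobots needed to consume all the carbon
nanobots_needed = BIOMASS_CARBON_ATOMS // ATOMS_PER_NANOBOT

# Count doublings, starting from one nanobot, until the population
# is at least as large as the number needed
doublings = 0
population = 1
while population < nanobots_needed:
    population *= 2
    doublings += 1

hours = doublings * SECONDS_PER_REPLICATION / 3600

print(f"Nanobots needed: 10^{len(str(nanobots_needed)) - 1}")
print(f"Doublings: {doublings}")
print(f"Time: {hours:.1f} hours")
```

With these inputs the loop stops at 130 doublings, and 130 cycles of 100 seconds come to about 3.6 hours, in line with the roughly 3.5 hours quoted.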

We are not there yet. And we may be underestimating or overestimating how hard the road is. Still, everything suggests that nanotechnology is not far off. Kurzweil expects that we will have it by the 2020s. Governments know that nanotechnology may promise a great future, and so they are investing many billions in it.

Just imagine what capabilities a superintelligent computer would gain if it got hold of a reliable nanoscale assembler. But nanotechnology is our idea, and we are struggling to master it; it is hard for us. What if for an ASI system it is mere child's play, and the ASI comes up with technologies many times more powerful than anything we can even conceive of? We agreed, did we not, that no one can imagine what artificial superintelligence will be capable of? It is believed that our brains cannot predict even the minimum of what will happen.

What could AI do for us?

Armed with superintelligence and all the technology that superintelligence could create, ASI will probably be able to solve all of humanity's problems. Global warming? ASI would first halt carbon dioxide emissions by inventing a host of efficient ways to generate energy that do not involve fossil fuels. Then it would come up with an effective, innovative way of removing excess CO2 from the atmosphere. Cancer and other diseases? Not a problem: health care and medicine would change in ways that are impossible to imagine. World hunger? ASI would use nanotechnology to build meat from scratch that is identical to natural meat: real meat.

Nanotechnology could turn a pile of garbage into a vat of fresh meat or other food (not necessarily in a familiar form: imagine a giant apple cube) and distribute all that food around the world using advanced transportation systems. Of course, this would be great for animals, which would no longer have to die for food. ASI could also do many other things, like saving endangered species or even bringing back extinct ones from preserved DNA. ASI could solve our most difficult macro-level problems: our most complex debates about economics, ethics and philosophy, and world trade would all be painfully obvious to an ASI.

But there is one special thing that ASI could do for us, something so alluring and tantalizing that it would change everything: ASI could help us overcome mortality. As you gradually come to grasp the possibilities of AI, you may find yourself rethinking all your ideas about death.

Evolution had no reason to extend our lifespan beyond what it is now. If we live long enough to bear and raise children to the point where they can fend for themselves, that is enough for evolution. From an evolutionary point of view, 30-plus years is enough for that, and there is no reason for mutations that extend life to be favored by natural selection. William Butler Yeats described our species as "a soul fastened to a dying animal". Not much fun.

And since we all die someday, we live with the idea that death is inevitable. We think of aging the way we think of time: both keep moving forward, and there is nothing we can do to stop them. But the thought of death is treacherous: seized by it, we forget to live. Richard Feynman wrote:

"It is one of the most remarkable things that in all of the biological sciences there is no clue as to the necessity of death. If you say we want to make perpetual motion, we have discovered enough laws as we studied physics to see that it is either absolutely impossible or else the laws are wrong. But there is nothing in biology yet found that indicates the inevitability of death. This suggests to me that it is not at all inevitable and that it is only a matter of time before the biologists discover what it is that is causing us the trouble and that this terrible universal disease will be cured."

The fact is that aging has nothing to do with time. Aging is the physical materials of the body wearing out. Car parts degrade too, but is that aging inevitable? If you keep repairing a car as its parts wear out, it will run forever. The human body is no different, just more complex.

Kurzweil talks about intelligent, Wi-Fi-connected nanobots in the bloodstream that could perform countless tasks for human health, including regularly repairing or replacing worn-out cells anywhere in the body. If this process were perfected (or a smarter alternative were devised by an ASI), it would not only keep the body healthy but could reverse aging. The difference between the body of a 60-year-old and that of a 30-year-old comes down to a handful of physical things that could be corrected with the right technology. ASI could build a machine that a person walks into as a 60-year-old and walks out of as a 30-year-old.

Even a deteriorating brain could be renewed. An ASI would surely know how to do this without affecting the brain's data (personality, memories, and so on). A 90-year-old suffering from complete brain degradation could be retrained, renewed, and returned to the start of their career. This may seem absurd, but the body is a collection of atoms, and an ASI could surely manipulate any atomic structure with ease. It is not so absurd after all.

Kurzweil also believes that artificial materials will become more and more integrated into the body as time goes on. To begin with, organs could be replaced with super-advanced machine versions that would run forever and never fail. Then we could redesign the body completely, replacing red blood cells with perfected nanobots that move on their own, removing the need for a heart at all. We could also enhance our cognitive abilities, think billions of times faster, and access all the information available to humanity through the cloud.

The possibilities for reaching new horizons would be truly limitless. Humans have managed to give sex a new purpose: they do it for pleasure, not just for reproduction. Kurzweil believes we can do the same with food. Nanobots could deliver ideal nutrition directly to the body's cells while letting unhealthy substances pass straight through the body.

Nanotechnology theorist Robert Freitas

Source: hi-news.ru